Claude Code Insights

A look at what's working and what's not after 155 sessions and 1,338 messages with Claude Code as a development partner

Thanks, /insights. I always appreciate help getting 1% or more better every day.

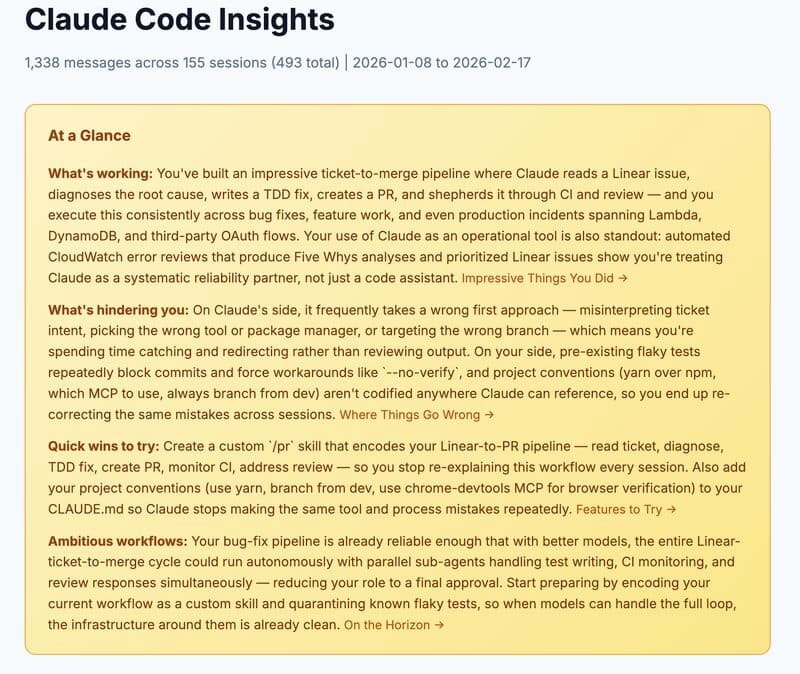


1,338 messages across 155 sessions (493 total) | 2026-01-08 to 2026-02-17

At a Glance

What's working: You've built an impressive ticket-to-merge pipeline in which Claude reads a Linear issue, diagnoses the root cause, writes a TDD fix, creates a PR, and shepherds it through CI and review. You execute this consistently across bug fixes, feature work, and even production incidents spanning Lambda, DynamoDB, and third-party OAuth flows. Your use of Claude as an operational tool also stands out: automated CloudWatch error reviews that produce Five Whys analyses and prioritized Linear issues show you're treating Claude as a systematic reliability partner, not just a code assistant.

What's hindering you: On Claude's side, it frequently takes a wrong first approach — misinterpreting ticket intent, picking the wrong tool or package manager, or targeting the wrong branch — which means you're spending time catching and redirecting rather than reviewing output. On your side, pre-existing flaky tests repeatedly block commits and force workarounds like '--no-verify', and project conventions (yarn over npm, which MCP to use, always branch from dev) aren't codified anywhere Claude can reference, so you end up re-correcting the same mistakes across sessions.

Quick wins to try: Create a custom '/pr' skill that encodes your Linear-to-PR pipeline — read ticket, diagnose, TDD fix, create PR, monitor CI, address review — so you stop re-explaining this workflow every session. Also add your project conventions (use yarn, branch from dev, use chrome-devtools MCP for browser verification) to your CLAUDE.md so Claude stops making the same tool and process mistakes repeatedly.
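
As a minimal sketch, the conventions named above could be written into CLAUDE.md along these lines. The heading and exact wording are assumptions for illustration, not the contents of the actual project file; only the three rules themselves come from the report.

```markdown
# Project conventions

- Use `yarn`, not `npm`, for installing dependencies and running scripts.
- Always branch from `dev`.
- Use the chrome-devtools MCP for browser verification.
```

Keeping each rule to one short imperative line makes it easy to extend the list whenever the same correction comes up again in a later session.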

Ambitious workflows: Your bug-fix pipeline is already reliable enough that with better models, the entire Linear ticket-to-merge cycle could run autonomously with parallel sub-agents handling test writing, CI monitoring, and review responses simultaneously — reducing your role to a final approval. Start preparing by encoding your current workflow as a custom skill and quarantining known flaky tests, so when models can handle the full loop, the infrastructure around them is already clean.
